Lean Startup Series: Vanity Metrics vs. Actionable Metrics

Metrics and measurement are at the heart of the lean startup approach and agile methods of product development. Yet measurement alone is not enough – success comes from measuring the right things. That’s the difference between vanity metrics and actionable metrics.


Here at Boldare, we see successful digital product development as both an art and a science. Achieving elegant, functional code or a seamless and intuitive UI takes a high degree of flair, but the process also requires an element of scientific rigor: devising and testing hypotheses and, importantly, going with what the results tell us, not what we wish they’d tell us.

That’s how we come to understand the product owner’s concept and the users’ needs, and how we can create that elegant code and intuitive UI.

Yes, building a successful product is both art and science. But how exactly do you define “successful”? That is another of the more scientific parts of the process: devising metrics and measures, and knowing what data you need to collect and where to get it. Done correctly, this will tell you (and prove to you) when your product is a success. What’s more, these metrics and the resulting data will also prove useful in marketing the product.

See other articles from the Lean Startup Series:

- Lean Startup Series: Validated Learning

- Lean Startup Series: Innovation Accounting

Lean startup metrics

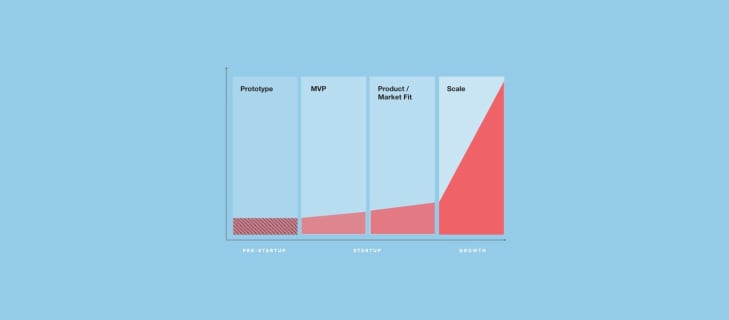

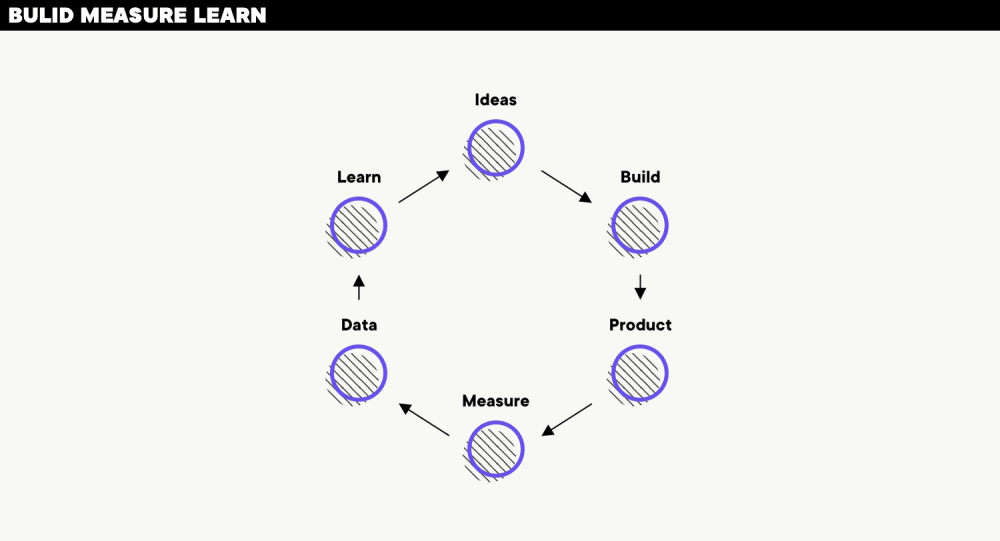

At Boldare, we use a combination of the lean startup approach and the agile scrum framework to structure and guide our digital product development. Central to lean startup is the concept of Build-Measure-Learn.

- The Build phase includes not only building the product (or prototype or MVP) but also setting up hypotheses to test the product concept and its likely reception by users. Those hypotheses include lean startup metrics: success criteria that give a clear answer to the question “What does success look like?”

- In the Measure phase, we gather information and feedback from users responding to the version of the product being tested. The information that we collect is determined by the metrics set previously.

- This data, and the conclusions we can draw from the test product’s performance against the metrics, are considered in the Learn phase. This leads to a deeper understanding of the concept and the product requirements, and probably to a new hypothesis to be tested.

However, as Eric Ries, author of “The Lean Startup”, has pointed out, there are vanity metrics and there are actionable metrics.

Vanity Metrics vs. Actionable Metrics

A vanity metric is like a distorting mirror: you might like what you see in it, but it’s not a true picture; it’s just your vanity. After your product test, you probably have some impressive numbers, but either they’re not relevant to the hypothesis or they don’t tell you anything you need to know to improve your product. Either way, it’s vanity.

Classic examples of vanity metrics in a lean startup might be:

- Number of hits on a webpage.

- Number of downloads of an app.

Yes, your new webpage had a lot of hits, but what’s a “hit” anyway? How many people is that? What does it tell you about their experience on your page? What can you do to improve that experience? Where do those hits come from? What did it cost to generate them? How do you generate more? The answer to all these questions is: who knows?
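To make the first of those questions (“How many people is that?”) concrete, here is a minimal Python sketch. It assumes a hypothetical access log in which each record is a (visitor ID, page path) pair; the records and function names are invented for illustration. It counts raw hits and then the distinct visitors behind them.

```python
from collections import Counter

# Hypothetical access-log records: (visitor_id, path).
# Real records would come from your web server or analytics tool.
LOG = [
    ("v1", "/"), ("v1", "/"), ("v1", "/pricing"),
    ("v2", "/"), ("v1", "/"), ("v3", "/"),
]

def page_hits(log, path):
    """Vanity view: every request for the page counts as a 'hit'."""
    return sum(1 for _, p in log if p == path)

def unique_visitors(log, path):
    """Closer to 'how many people is that?': distinct visitors to the page."""
    return len({v for v, p in log if p == path})

def hits_per_visitor(log, path):
    """Shows whether hits come from many people or a few people reloading."""
    return dict(Counter(v for v, p in log if p == path))

if __name__ == "__main__":
    print(page_hits(LOG, "/"))         # 5
    print(unique_visitors(LOG, "/"))   # 3
    print(hits_per_visitor(LOG, "/"))  # {'v1': 3, 'v2': 1, 'v3': 1}
```

Here five hits turn out to be three people, one of whom accounts for most of the traffic. Even so, the count still says nothing about their experience on the page or how to improve it, which is why a hit count on its own is not actionable.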

Basically, vanity metrics might be great for PR (so long as no one questions them too closely), but the information they provide is just smoke and mirrors.

On the other hand, an actionable metric provides the product development team with genuinely useful information. An actionable metric is often linked to specific, repeatable tasks or features in a way that tells you how you might improve those tasks or features.

Sales can be an example of an actionable metric. When you design a new feature, a simple A/B test (the existing version of the product compared with a version that includes the new feature) can use sales as its metric to establish how users and customers respond to the feature.

The data from that metric can point firmly in one of several directions: releasing the new feature generally, reworking the feature for better customer appeal, or abandoning the feature and looking for an alternative (more popular or more needed) improvement.
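The A/B test described above can be sketched in Python. This is a minimal illustration under stated assumptions, not Boldare’s own method: it assumes the sales metric is a conversion rate (purchases divided by visitors) for each variant, and it swaps in a standard two-sided, two-proportion z-test to judge whether the difference is likely to be real. The function names, the example counts and the 0.05 threshold are all illustrative choices, as is treating an inconclusive result as “rework or collect more data”.

```python
import math

def conversion_rate(purchases: int, visitors: int) -> float:
    """Share of visitors who made a purchase."""
    return purchases / visitors

def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int):
    """Two-sided z-test for a difference between two conversion rates.

    Returns (z, p_value), using the pooled rate under the null
    hypothesis that both variants convert equally well.
    """
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        # No variation at all (0% or 100% conversion): no evidence of a difference.
        return 0.0, 1.0
    z = (p_b - p_a) / se
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value

def decide(conv_a, n_a, conv_b, n_b, alpha=0.05):
    """Map the result onto the decisions in the text.

    Variant A is the existing version; variant B has the new feature.
    """
    z, p = two_proportion_z_test(conv_a, n_a, conv_b, n_b)
    if p >= alpha:
        return "inconclusive: rework the feature or collect more data"
    if z > 0:
        return "release the feature"
    return "abandon the feature and look for an alternative"

if __name__ == "__main__":
    # Invented numbers: 4.0% vs 5.2% conversion on 5,000 visitors each.
    print(decide(200, 5000, 260, 5000))  # release the feature
```

The point of the design is that the metric maps directly onto a decision: a clear uplift, a clear drop, or no detectable difference each lead to a different next step, which is what makes sales actionable in this setting.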


Why vanity metrics are dangerous

Once again, Eric Ries offers some insight. He has observed that when vanity metrics are in use, nobody can clearly say what caused an improvement in the figures, so people often attribute it to their own part of the project (everybody’s vanity at work). Likewise, should performance against the metric worsen, it’s definitely due to somebody else’s work.

Let this continue long enough and watch the negative impact on the overall quality of your teamwork.

In a sense, this is another distinction between the two types of lean startup metrics.

- Vanity – nobody knows what caused the change in numbers, so everyone has an opinion on who deserves the credit or blame.

- Actionable – it’s crystal clear what the metric is measuring; opinions aren’t necessary to understand it.

In other words, long-term use of vanity metrics is bad not only for the health of the product development project but also for the health of the organization.

A good metric is actionable – what else?

An actionable metric is specific, linked to the hypothesis under test, and produces data results (good or bad) that are unmistakable in their meaning. It tells you what outcomes come from which product features or changes.

As well as being actionable, a metric should be accessible: not only clearly understandable, but also based on data that is widely and easily available. In lean startups, metrics and reports are not the sole province of managers and supervisors; that’s just not how these businesses work.

What’s more, an actionable metric should be auditable, i.e. any member of the project team can generate the results or report from the source data.

Keeping metrics and data open and transparent ensures that team members are defined by their roles and skills (what they bring to the table) and not their ‘level of clearance’.

Finally, good actionable metrics are finite: they have a shelf life, so don’t become attached to them. If a good metric is tied to testing a hypothesis, then once the test is done and a credible result achieved, the project moves on to the next hypothesis and the next version of the product. Then, once the product is launched, the metrics may change over time as the product matures, the available data increases, and targeted marketing becomes the priority.

For example, for a young or in-development product, you may be restricted to data such as:

- Social media shares, downloads, followers, active members, reviews, etc.

Once the product is established and starts to feel the effects of marketing, you might want to look at what you can do with:

- Time on site, conversion rate, sales, revenue, customer satisfaction, etc.

And a mature, well-known product might benefit from metrics that draw on data such as:

- New members, lost members, profit, revenue per customer, costs of production, churn rate, retention, etc.

These are only a few examples of actionable metrics. Remember, every project is unique!
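Churn rate and retention, from the mature-product examples above, are simple ratios over a period. The sketch below is a minimal Python illustration, assuming members are identified by IDs and that you have the set of members active at the start of a period and the set active at its end. Definitions vary between businesses, so treat this as one common convention rather than the definition; the member names are invented.

```python
def _require_members(start_members: set[str]) -> None:
    """Churn and retention are undefined if nobody was a member at the start."""
    if not start_members:
        raise ValueError("no starting members: churn/retention undefined")

def churn_rate(start_members: set[str], end_members: set[str]) -> float:
    """Fraction of starting members who are no longer active at the end.

    Members who joined during the period are ignored, so growth cannot hide churn.
    """
    _require_members(start_members)
    lost = start_members - end_members
    return len(lost) / len(start_members)

def retention_rate(start_members: set[str], end_members: set[str]) -> float:
    """Fraction of starting members still active at the end (equals 1 - churn)."""
    _require_members(start_members)
    return len(start_members & end_members) / len(start_members)

def new_members(start_members: set[str], end_members: set[str]) -> set[str]:
    """Members active at the end who were not active at the start."""
    return end_members - start_members

if __name__ == "__main__":
    start = {"ana", "ben", "cho", "dev"}
    end = {"ana", "cho", "eli", "fay", "gus"}
    print(len(end) - len(start))            # 1
    print(churn_rate(start, end))           # 0.5
    print(retention_rate(start, end))       # 0.5
    print(sorted(new_members(start, end)))  # ['eli', 'fay', 'gus']
```

In this invented example the headline member count grows, yet half of the original members left; the churn figure surfaces that, and because the inputs are just the raw member lists, anyone on the team can recompute it.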

Basic principles of actionable metrics

- Quality not quantity – Don’t swamp your product project with data; focus on the right data. One actionable metric that tells you something useful about the product in development is more valuable than a dozen feel-good vanity metrics.

- People – A tenet of lean startup states: “Metrics are people, too.” This means you need to know where the data is coming from. Can it be traced back to the individuals generating it? Apart from personalizing the process and keeping the focus on users, there’s a practical advantage: if there’s any doubt about the meaning of the data, you know whom to ask.

- Measure only what you need – Even for a small product change, the amount of data available can be overwhelming. Measure what you need to prove or disprove the hypothesis and no more. It’s easy to get lost in irrelevant information (remember the number of hits on a webpage?).

Actionable metrics example: the story of Boldare

Boldare is a combination of two sister companies, XSolve and Chilid. The idea behind building this new entity was to offer the full range of software design and development services. Naturally, we used our usual product development processes, including hypotheses and metrics.

One of our initial hypotheses in the project was:

“The client is choosing a product development company over a software development company”.

To test this hypothesis, we developed two MVP versions of the boldare.com website to see what appealed to our clients. To narrow the scope and home in on useful data, we concentrated on what was driving clients’ choices, devising five sub-hypotheses:

- The client chooses a team that builds products

- The client chooses a team that builds software

- The client chooses between a software team and a product team unconsciously

- The client is not identifying the product offer

- The client is not identifying the software offer

These specific statements were easy to test through a series of scripted interviews with users and clients. Not only was the data gathered directly relevant to the hypothesis, but the ‘people’ element was also preserved, because we could clearly identify the individual source of each answer.

The results showed that our ‘product offer’ was well received and ready to go ahead, but users weren’t engaging with the ‘software offer’, which prompted further development work.

Actionable metrics for a better product

Metrics are key to the Build-Measure-Learn principle of lean startup. However, those metrics must be actionable, i.e. relevant to the product and to the hypothesis being tested by the project team.

Actionable metrics will drive the development project to a successful conclusion and on into the product marketing phase, while so-called vanity metrics involve collecting data that ultimately cannot be used to further the product development process.

The final word goes to Eric Ries: “The only metrics that entrepreneurs should invest energy in collecting are those that help them make decisions.”

